Our paper on a gesture-based VR animation system will appear at UIST 2019. Huge congratulations to Rahul Arora and my amazing collaborators. I’m very excited about this work and look forward to its impact on future AR/VR design tools. It explores new avenues at the intersection of mid-air hand gestures and 3D animation.

—————————————————————————————-

Hand Gestures

Hand gestures are a ubiquitous tool for human-to-human communication, often employed in conjunction with or as an alternative to verbal interaction. They are the physical expression of mental concepts, thus augmenting our communication capabilities beyond speech. Mid-air gestures allow users to form hand shapes they would naturally use when interacting with real-world objects, thus exploiting users’ knowledge and experience with physical devices.

VR Animation

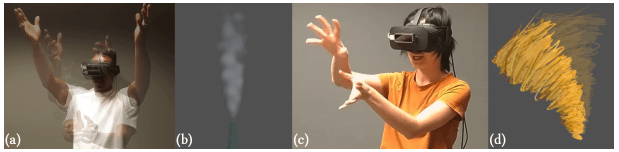

Our work investigates the application of gestures to the emerging domain of VR animation, a compelling new medium whose immersive nature provides a natural setting for gesture-based authoring. Current VR animation tools are dominated by controller-based direct manipulation techniques and do not fully leverage the breadth of interaction techniques and expressiveness afforded by hand gestures. We explore how gestural interactions can be used to specify and control various spatial and temporal properties of dynamic, physical phenomena in VR.

Gesture Elicitation Study

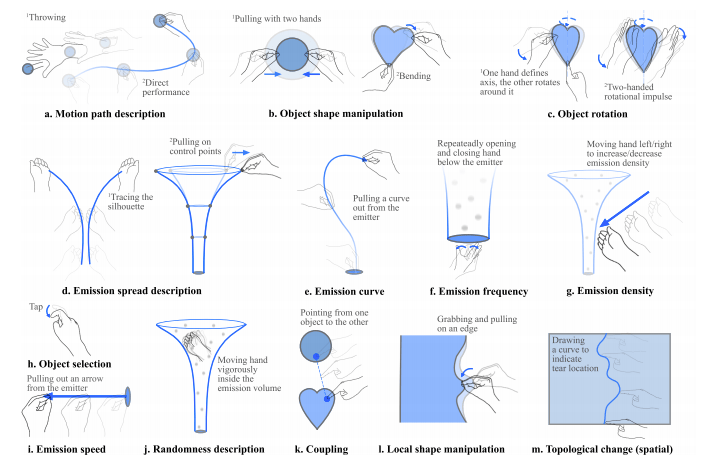

We first perform a gesture elicitation study to understand user preferences for a spatiotemporal, bare-handed interaction system in VR. Specifically, we focus on creating and editing dynamic, physical phenomena (e.g., particle systems, deformations, coupling), where the mapping from gestures to animation is ambiguous and indirect.

Design Guidelines

From our findings, we propose several design guidelines for future gesture-based VR animation authoring tools. See the paper for more details.

MagicalHands System

As a proof of concept, we implemented a system, MagicalHands, that supports 11 of the most commonly observed interaction techniques. Our implementation leverages hand pose information to perform direct manipulation and abstract demonstrations for animating in VR.

Publication:

MagicalHands: Mid-air Hand Gestures for Animating in VR

R Arora, RH Kazi, D Kaufman, W Li, K Singh

Paper | Project Page